“BY bringing everyone online, we’ll not only improve billions of lives, but we’ll also improve our own as we benefit from the ideas and productivity they contribute to the world.”

It all sounded so positive: declaring internet access a ‘human right’ and pledging to make it available, free of charge, to billions of people in the developing world.

In August 2013, this was the stated aim of Facebook boss Mark Zuckerberg, who made the human right declaration in a document outlining his company’s aim to connect the world.

The company had already launched Facebook Zero in 2010, offering people free access to Facebook – and what followed in 2013 was the launch of Internet.org and, later, the Free Basics app.

Free Basics, as the name suggests, gave smartphone users who installed it free data with which to access the internet.

‘Wow’, I hear you scream, ‘What a generous thing to do! The Zuck really meant what he said!’.

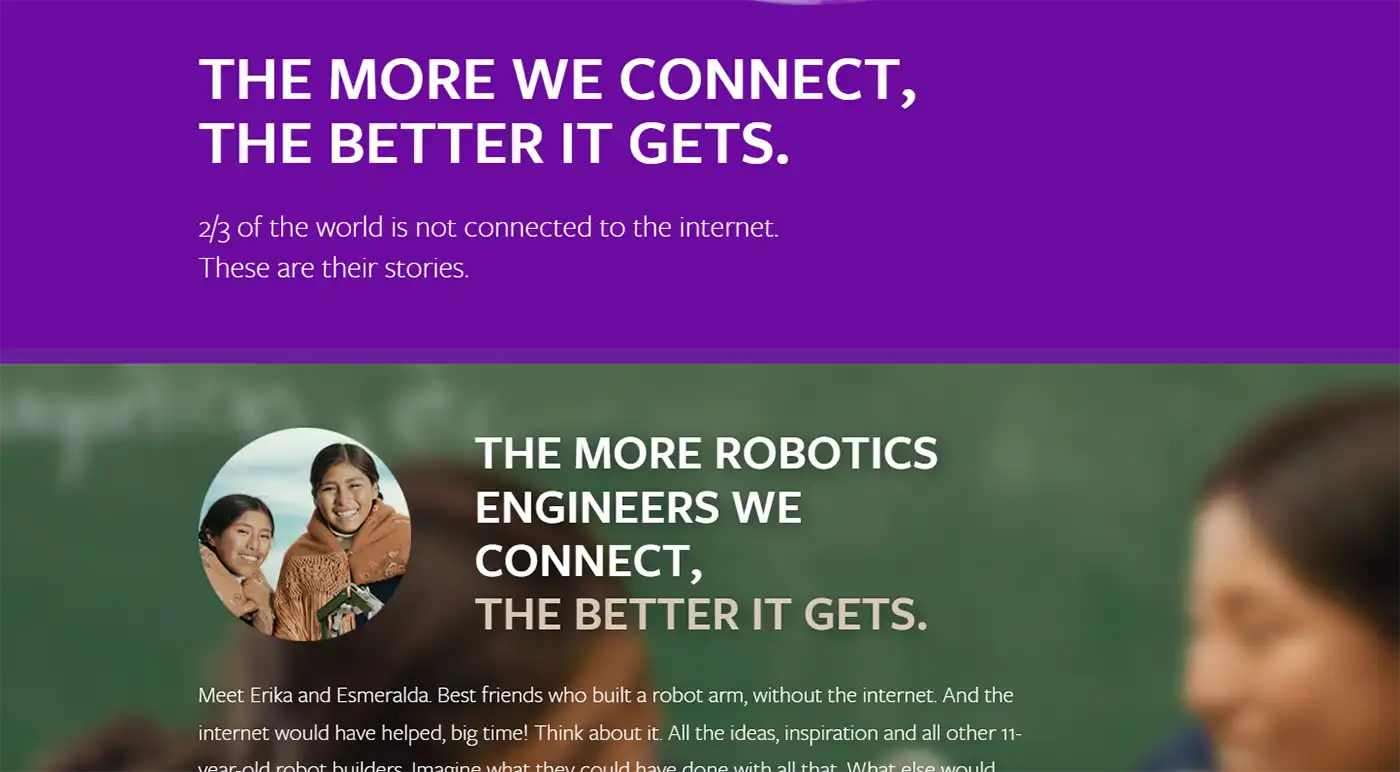

‘The more we connect, the better it gets’, screamed the Internet.org website – launched alongside the 2013 announcement.

The Internet.org homepage in 2015. Picture: Wayback Machine

There was, however, a catch: people could not access all the internet has to offer.

It turned out Free Basics allowed people to access a very, very limited part of the internet – namely a select few, low-data websites permitted by the app’s creators, primarily of course, Facebook.

So, when they got hold of a phone and Free Basics, users in certain parts of the world excitedly got their first look at the internet. What they saw was Facebook.

Basically, Free Basics made many people believe Facebook is the internet, and the internet is Facebook. Indeed, in 2012, it was believed free access to Facebook was behind a surge in internet usage. In the Philippines, for example, one advertising executive at the time spoke of how, “Facebook is literally becoming the internet”.

According to Nielsen Research, by the end of 2011, the number of people with internet access in the Philippines stood at 33.6 million. The number of Facebook users in 2012 stood at 29.4 million. Facebook Zero worked. And Free Basics did too.

So, connecting millions or billions of people to the internet was an astute business move for Mr Zuckerberg and his ever-filling Facebook – something he didn’t mention when declaring it a ‘human right’.

But to be fair, he did hint at it, saying how – even before they were actually connected – people’s notions of ‘the internet’ and ‘Facebook’ were interchangeable.

“Even when they can afford it, many people who have never experienced the internet don’t know what a data plan is or why they’d want one,” Zuckerberg said. “However, most people have heard of services like Facebook and messaging and they want access to them.

“If we can provide people with access to these services, then they’ll discover other content they want and begin to use and understand the broader internet.”

So, giving folks access to Facebook and WhatsApp is for the greater good, because they will inevitably progress to exploring more of the internet and the world will benefit. And if they don’t, well, Facebook will do alright out of it…

Now, dear reader, we are not a naïve bunch. We know how the corporate world works, right?

Facebook was getting something – a lot – out of this, of course, including huge, unrivalled access to potentially billions of new users – and the resultant income.

Through Free Basics and Facebook Zero, the company received a captive, restricted audience reliant on their services, plus access to these people’s data – perhaps the most valuable commodity in the technological age.

But still, you may see this as a worthwhile trade-off in connecting billions of people to the information superhighway (or as I’ve come to know it, the misinformation slippery slope).

Meanwhile, Free Basics continued to roll out – remember, Facebook was ‘literally becoming the internet’ in 2012.

But in 2016, it hit a snag.

As we’ve discussed, Free Basics, far from providing an open door to the online world, in actuality gave people a glimpse into a carefully managed set of websites – and Facebook, of course. Always Facebook.

For many of us, this brought the notion of net neutrality into view for the first time.

In brief, net neutrality is the concept that the entire internet is there for everyone: all internet traffic should be treated equally and no one should be allowed to restrict access for their own ends.

Like, I dunno, making Facebook free to access, but not Google for example (as Free Basics did).

The conflict between a ‘human right to Facebook’ and net neutrality came to a head in India in 2016.

India is a huge, huge market, obviously, so Facebook was not keen on anything that might restrict the rollout of Free Basics to more than a billion potential customers, and fought the possibility of restrictions all the way.

However, in a rare turn of events, Facebook lost.

The Telecom Regulatory Authority of India (TRAI) introduced rules that favoured net neutrality, and stipulated “no service provider can offer or charge discriminatory tariffs for data services on the basis of content”. Facebook was rumbled, and Free Basics lost the Indian market.

Controversy ensued when, reacting to the news in a now-deleted tweet, Facebook board member Marc Andreessen (billionaire investor and co-founder of Netscape back in the day) may have spoken the quiet part out loud when he wrote: “Anti-colonialism has been economically catastrophic for the Indian people for decades. Why stop now?”.

So, was he saying Free Basics was ‘colonialism’? Certainly sounded like it, and it’s easy to argue the service looked to colonise the internet for Facebook.

Not a good look, I’d argue.

Mr Zuckerberg disavowed the comments, saying he found them “deeply upsetting” and they did not “represent the way Facebook or I think at all”.

That’s good then. Facebook is not a colonialist power. Got it.

Anyway, since then, coverage of the debate over net neutrality and Free Basics itself has diminished – certainly in any mass media – which Facebook may well be delighted with.

But despite the setback in India, Free Basics was still going – and growing.

As of 2019, it was reported to be available in 65 countries, including 30 African nations. In 2016, Facebook itself touted how Free Basics had connected 40 million people, and in 2018, that number was reported to have hit almost 100 million.

It has been doing very well, thank you very much…

Mark Zuckerberg meets President Donald Trump at the White House in 2019. Picture: The White House

Jump ahead to 2025 and another announcement by Mr Zuckerberg.

This time, the heady goals of opening the internet to the world’s poor in a bid to allow them to express their ideas and creativity were notably absent.

No, this announcement had an altogether more sombre tone, though it was spun to try and present some sort of lofty and worthy ambition.

Zuckerberg appeared in a to-camera piece to explain how his company was to “end the current third-party fact-checking program in the United States”.

Now, just how much of a fact-checking program there has ever been on Facebook is up for debate (spoiler alert, it’s nowhere near as big as you think it is), but this was a full and frank policy of scrapping any attempt at monitoring what people post.

“The intention of the program was to have these independent experts give people more information about the things they see online, particularly viral hoaxes, so they were able to judge for themselves what they saw and read,” the company said.

“That’s not the way things played out, especially in the United States. Experts, like everyone else, have their own biases and perspectives. This showed up in the choices some made about what to fact check and how.

“Over time, we ended up with too much content being fact checked that people would understand to be legitimate political speech and debate. Our system then attached real consequences in the form of intrusive labels and reduced distribution. A program intended to inform too often became a tool to censor.”

Did it? Did it really?

Facebook went on to say how it had been “over-enforcing our rules”, with content removed incorrectly around 20% of the time (not including “large-scale adversarial spam attacks”).

As a result, fact-checking would effectively be scrapped, replaced with a ‘community notes’-style system seen on Twitter (now X).

However, the firm also claimed that in December 2024, for example, it removed “millions of pieces of content every day”.

That’s a lot of content, regardless of what Facebook says. And it’s a lot of misinformation, or misleading information, or a lot of threats, videos of violence, abuse, or more.

If we’re being very conservative (small-c), accepting one million items were removed each day, even with a 20% error rate, it means 800,000 were removed because they breached Facebook’s rules. That’s a lot of potentially hateful, abusive, racist or misleading information that won’t be seen.
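For anyone who wants to check the sums, here is a minimal sketch of that back-of-the-envelope calculation. The function name and the one-million figure are my own illustrative assumptions (Facebook only said “millions”), and it reads the 20% figure as the share of removals that were mistakes.

```python
def correctly_removed(removed_per_day: int, error_rate_pct: int) -> int:
    """Estimate how many removed items genuinely broke the rules.

    Hypothetical helper: assumes error_rate_pct is the share of
    removals that were mistakes, as the 20% figure is read here.
    """
    # Integer arithmetic keeps the result exact for whole percentages.
    return removed_per_day * (100 - error_rate_pct) // 100


daily_removals = 1_000_000  # assumption: a deliberately low reading of "millions"
error_rate_pct = 20         # the error rate quoted above

print(correctly_removed(daily_removals, error_rate_pct))  # 800000
```

Plug in a larger daily figure and the number of genuine rule-breaking posts only grows.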

Now, however, it looks like they will not be removed, instead left at the mercy of other Facebook users, who might put a note on something suggesting it’s, well, you know, dangerous, or abusive.

Sounds fair, hey? And I’m sure leaving the decision up to people on Facebook and having no real moderation will work and nothing really bad will happen.

How bad could it be, right? Spoiler alert, it can be bad. Very bad.

More than 700,000 people fled Myanmar after an ‘ethnic cleansing’ began. Picture: UNHCR/Roger Arnold

In August 2017, security forces in the Asian country of Myanmar embarked on a brutal campaign of ethnic cleansing. Their targets were Rohingya Muslims, who largely lived in the west of the country.

They’ve had a tough time. And, starting around 2012, things got worse.

Violence erupted in Rohingya areas, with many killed and thousands displaced. By 2017, Amnesty estimates around 127,000 Rohingya were confined to refugee camps after fleeing the violence or having their homes destroyed.

In August of that year a group of armed Rohingya, known as the Arakan Rohingya Salvation Army (ARSA), responded with attacks on a number of military and security services targets.

What followed was nothing short of an ethnic cleansing of the Rohingya, with Myanmar’s security services – led by the army – attacking Rohingya villages and people.

In the months that followed, again according to Amnesty, more than 700,000 people fled to neighbouring Bangladesh to avoid “relentless and systematic” “clearance operations” which saw thousands of Rohingya people killed, raped, and tortured.

Mohamed, 20, told Amnesty how “they looted everything … then they burned down our houses”.

He and his family set off on a 122-day walk to find some sort of safety in Bangladesh. But the journey was not safe, with refugees coming under regular attack.

Mohamed said he saw both of his grandfathers killed, including one who was 70 (“They killed and burned my grandfather; we never saw his face again”). His other grandfather was “killed by a bullet to the brain”.

But what does any of this have to do with Facebook?

Well, until the early 2010s (twenteens?), Myanmar was an isolated country. At the end of 2013, it was reported fewer than 13% of people had a mobile phone, and around 1% had access to the internet.

This all changed in 2014, when a nationwide mobile network was built. The cost of owning a mobile phone plummeted, and people snapped up the chance to get online.

In response to the opening up of a new market, companies pounced, and one became dominant. Facebook.

In a hark back to the Philippines, the Asia Society described how Facebook became, “to all intents and purposes, the internet in Myanmar”.

In 2015, elections in the newly-uninhibited Myanmar saw a democratic party achieve a historic victory, gaining control of the government, with leader Aung San Suu Kyi even heralding the impact of the internet on exposing corruption and uniting activists.

But there was a dark side to this ‘progress’.

In 2016, Facebook rolled out Free Basics in Myanmar as take-up of the internet continued to increase.

By this point, a single website reportedly accounted for 85% of all internet traffic in Myanmar. That site was Facebook, with an estimated 10 million users.

The following year, the ethnic cleansing began.

The role of social media in the atrocities – which, as we’ve learned, in Myanmar meant Facebook – soon became an issue, with the likes of Ashin Wirathu, a Buddhist monk once labelled ‘the Buddhist bin Laden’, among those praising its impact.

“Social media is much better than using town criers,” he told Wired. “If I give a sermon, even people who cannot attend it can hear my message. Now I have thousands of followers.”

Investigations showed Facebook was rife with anti-Rohingya propaganda, stirring racial hatred.

Myanmar lacked an established free press to counter it, while politicians and activists were happy to bolster false claims, branding any pushback as itself ‘fake news’.

In a paper entitled Reconciling Expectations With Reality in a Transitioning Myanmar, Debra Eisenman, MD of the Asia Society Policy Institute, wrote: “People carried the conversation on social media and ‘alternative facts’ became real violence.”

The chair of a fact-finding mission to Myanmar said Facebook had “turned into a beast” and blamed it for “worsening the country’s violence”.

And Free Basics played its part.

A teacher in the country told Amnesty how despite government repression, in the west of Myanmar, the Rohingya had managed to exist peacefully – until 2012, when Facebook exploded.

“We used to live together peacefully alongside the other ethnic groups in Myanmar,” they said. “Their intentions were good to the Rohingya, but the government was against us. The public used to follow their religious leaders, so when the religious leaders and government started spreading hate speech on Facebook, the minds of the people changed.”

Religious leaders like ‘the Buddhist bin Laden’.

The post-internet era in Myanmar saw an increasing amount of violence against the Rohingya, including incidents directly linked to social media.

In July 2014, an online rumour claiming two Muslim tea shop owners had raped a Buddhist employee was shared by Wirathu, also known as U Wirathu, who said “Mafia flame (of the Muslims) is spreading”, and that “all Burmans must be ready”.

Violence ensued, with two reported dead and 14 injured.

U Wirathu himself, in 2016, acknowledged the effectiveness of Facebook in spreading his message, a message that often spread hate and falsehoods and sowed division.

“If the internet had not come … not many people would know my opinion and messages like now,” he told BuzzFeed News. The internet, he said, was a “faster way to spread the messages”.

As if acknowledging the role of Facebook in the incident in 2014, authorities blocked the site in some areas for a time.

“We must fight them the way Hitler did the Jews, damn kalars!” read one post.

“These non-human kalar dogs, the Bengalis, are killing and destroying our land, our water and our ethnic people,” read another.

Posts spiked before, during and after violent incidents, including in the build-up to the ‘clearance operations’ of ethnic cleansing.

Numerous studies have gone on to highlight the impact of the internet – which in Myanmar was Facebook – on violence against the Rohingya.

So what did Facebook say about it all?

Like any good social media platform (should such a thing exist), Facebook has rules over content. They include the company’s ‘community standards’, which inform users how “hate speech” is not allowed and “creates an environment of intimidation and exclusion, and in some cases may promote offline violence”.

It may indeed.

But who enforces these standards? Who monitors content and decides if it breaks the rules?

Facebook says it uses a combination of human and AI means to enforce standards. So you would expect a company entering a market of millions to install means of enforcement in that market ahead of time, wouldn’t you?

Turns out, this is not the case. In 2018, four years after a Facebook-amplified rumour caused violence in Myanmar, Mark Zuckerberg was summoned to appear before the US Senate’s Commerce Committee and Senate Judiciary Committee.

Myanmar came up.

Mark Zuckerberg appears before Senators in the US in 2018

Senator Patrick Leahy, a Democrat, told Zuckerberg how, “six months ago, I asked your general counsel about Facebook’s role as a breeding ground for hate speech against Rohingya refugees. Recently, UN (United Nations) investigators blamed Facebook for playing a role in inciting possible genocide in Myanmar. And there has been genocide there”.

He pointed out hateful content which called “for the death of a Muslim journalist”.

“Now, that threat went straight through your detection systems, it spread very quickly, and then it took attempt after attempt after attempt, and the involvement of civil society groups, to get you to remove it,” he said.

“Why couldn’t it be removed within 24 hours?”

What Mr Zuckerberg said was revealing.

He said: “We’re working on this. And there are three specific things that we’re doing.

“One is we’re hiring dozens of more Burmese-language content reviewers, because hate speech is very language-specific. It’s hard to do it without people who speak the local language, and we need to ramp up our effort there dramatically.

“Second is we’re working with civil society in Myanmar to identify specific hate figures so we can take down their accounts, rather than specific pieces of content.

“And third is we’re standing up a product team to do specific product changes in Myanmar and other countries that may have similar issues in the future to prevent this from happening.”

Zuckerberg confirmed that in 2018, years after the problem was highlighted, the company was still not sufficiently staffed to deal with it.

Equally, “dozens” may sound a lot when you’re talking about how many sweets you ate on Christmas Day, but to oversee content from millions of Facebook users in a country rife with the potential for ethnic conflict? It is, effectively, nothing, I would suggest.

Earlier that year, in March, a Facebook employee had described how fact-checking in Myanmar was difficult, as there were no third-party fact checkers to work with.

Maybe hold off on the rollout, or employ your own, then?

But Facebook had no office in Myanmar, let alone anyone on the ground who spoke the language. It was an “absentee landlord”, according to deputy director of Human Rights Watch in Asia, Phil Robertson.

Millions of people were signing up to Facebook in Myanmar, rewarding the company with advertising revenue and data wealth, but it did nothing.

It has even been alleged the company’s algorithm, the program which decides what people see in their news feed, amplified much of the inflammatory content – because it gets engagement.

In Sri Lanka, one government official said Facebook “look at us only as markets … (But) we’re a society, we’re not just a market”.

A 2018 independent report – commissioned by the company – concluded that “Facebook has become a means for those seeking to spread hate and cause harm, and posts have been linked to offline violence”.

In 2021, a lawsuit filed in the US by the Rohingya laid out their view clearly.

It said the company was “willing to trade the lives of the Rohingya people for better market penetration in a small country in south-east Asia”.

Facebook continues to claim it is improving things in the country – and accounts have been banned, posts have been removed.

But did it come soon enough? Is it good enough? And why did it take thousands of deaths and the displacement of hundreds of thousands of people to make it happen?

It later emerged that in September 2017, Facebook quietly removed Free Basics from Myanmar.

Facebook, it could be argued, was able to slip into a country, ensnare much of its population in its algorithm, contribute to countless deaths through an ethnic cleansing, and then withdraw what little it was offering for free. All without opening an office.

Mark Zuckerberg makes his fact-checking announcement in January 2025. Picture: Facebook

Back to 2025, and Mr Zuckerberg’s announcement of an end to what little fact-checking existed at Facebook in the US (the actual fact-checking that goes on is a whole other story).

The throwing out of any pretence at moderation will see what little protection people have against misinformation, lies, hate and potentially violent rhetoric go with it.

Despite their best efforts at presenting otherwise, Mark Zuckerberg and his Facebook have shown scant interest in protecting users.

Facebook’s track record is of being interested in one thing: colonising the online world for growth and profit – and maybe, somewhere in the mix, actually doing some good.

And as board member Marc Andreessen said, “Anti-colonialism has been economically catastrophic”… And to Facebook, it appears, economic success is what matters.

‘The more we connect, the better it gets’ indeed.

PAUL JONES

Editor in Chief

NOTES & SOURCES:

As usual, I have done my best to link to sources for data presented in this piece, so readers can find out more, should they wish, but here are some of them – and others – worth reading. And as usual, should you wish to get in touch, please do – paul@blackmorevale.net – but please keep it civil!

- Facebook has a whole section devoted to Mr Zuckerberg’s claim the internet is a ‘human right’, which you can read HERE.

- And his 2025 announcement can be read and watched HERE

- Amnesty International’s report – The social atrocity: Meta and the right to remedy for the Rohingya – can be read in full HERE

- A full transcript of Mark Zuckerberg’s testimony to the US Senate Judiciary Committee in 2018 can be read HERE

- The Reconciling Expectations With Reality in a Transitioning Myanmar paper can be found in full HERE
